In April 2026, an attacker compromised one of the most widely used web infrastructure platforms in the world. Vercel hosts production workloads for OpenAI, Cursor, Pinterest, and millions of developers. No zero-day was used. No malware touched an endpoint. No part of Vercel's own code was breached.

The attacker walked through a door that someone inside Vercel had already unlocked. The door was opened by a Vercel employee approving a routine OAuth request. Nobody thought much of it at the time. That is the part of this incident worth dwelling on.

The chain ran on four identities. Not one was abused with malice

A Vercel employee was using Context.ai, an enterprise AI assistant. Context.ai learns company workflows by reading calendar, email, and internal documents. It does this through a Google Workspace OAuth grant. The approval was routine. It happens dozens of times a day inside any modern engineering organization. Context.ai was then breached itself. The attacker now held its persistent access into the employee's Workspace account. From Workspace, they pivoted into Vercel's internal environments. Once inside, they enumerated environment variables.

According to Hudson Rock, in February 2026, two months before Vercel published a single bulletin, a Context.ai employee downloaded Roblox auto-farm scripts. Those scripts delivered a Lumma infostealer. The infected machine handed attackers Google Workspace credentials, along with logins for Supabase, Datadog, and Authkit. The compromised account was support@context.ai, which was also a member of the context-inc Vercel team and had direct access to environment variable settings, project configuration, and deployment logs. The credentials were already circulating. Nobody acted on them.

Environment variables are configuration secrets that tell production applications how to connect to databases, payment processors, and third-party APIs. They hold the keys to everything a live system depends on.

Variables that Vercel's tenants had marked sensitive remained encrypted. They were confirmed inaccessible. Variables marked non-sensitive were readable in plain text. The sensitive flag was opt-in. Non-sensitive was the default. Any variable created without deliberately marking it otherwise was readable. Database URLs, webhook secrets, API keys, and auth tokens did not end up exposed through negligence. They ended up exposed because the system's default state made them readable, and nobody changed it. Four identity hops. No exploits. The chain was confirmed by Vercel CEO Guillermo Rauch and independently corroborated by security researcher Jaime Blasco hours before the official statement.
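The opt-in default described above can be checked for mechanically. What follows is a minimal sketch, not a Vercel API client: it assumes you have already exported your variables into simple records with a name and a sensitive flag. The `EnvVar` type and the name patterns are hypothetical and would need tuning to your own naming conventions.

```python
import re
from dataclasses import dataclass

# Hypothetical name patterns that often indicate a secret. These are an
# assumption for illustration, not a rule taken from Vercel.
SECRET_NAME_PATTERN = re.compile(
    r"(SECRET|TOKEN|PASSWORD|API_?KEY|PRIVATE_?KEY|DATABASE_URL|WEBHOOK)",
    re.IGNORECASE,
)


@dataclass(frozen=True)
class EnvVar:
    name: str
    sensitive: bool  # True only if someone opted in to the sensitive flag


def find_unprotected_secrets(env_vars):
    """Return names that look like secrets but sit at the non-sensitive default."""
    return [
        v.name
        for v in env_vars
        if not v.sensitive and SECRET_NAME_PATTERN.search(v.name)
    ]


sample = [
    EnvVar("STRIPE_SECRET_KEY", sensitive=False),
    EnvVar("DATABASE_URL", sensitive=True),
    EnvVar("NEXT_PUBLIC_SITE_NAME", sensitive=False),
    EnvVar("github_token", sensitive=False),
]
print(find_unprotected_secrets(sample))
```

Name matching is a blunt instrument. It will miss secrets with innocuous names, so treat a clean result as a starting point rather than proof.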

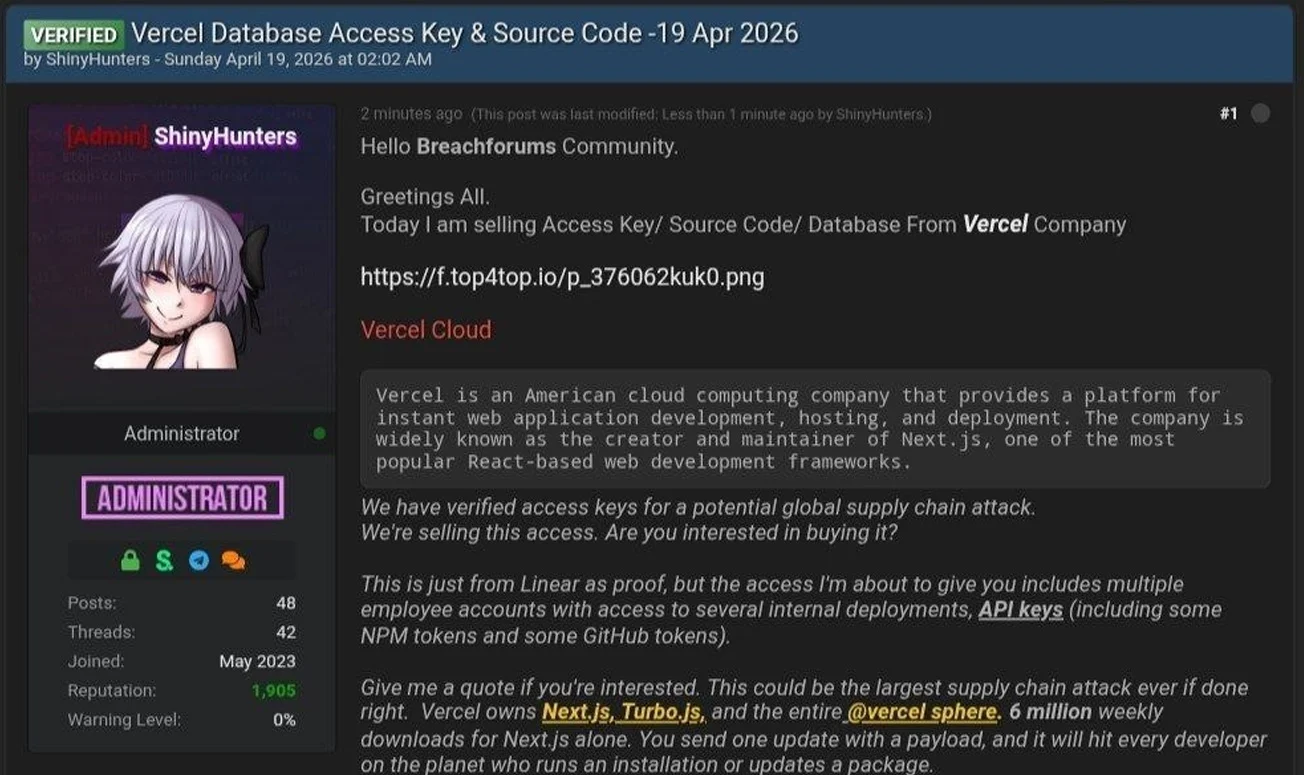

The noise that followed traveled faster than the facts

A threat actor posting under the ShinyHunters name appeared on BreachForums shortly after disclosure. They claimed to be selling Vercel access keys, source code, and npm tokens for $2 million. The post framed this as a potential global supply-chain attack via Next.js, which sees roughly 6 million weekly npm downloads. Those claims remain unverified. Actors historically associated with the ShinyHunters brand have publicly denied involvement. Vercel does not appear on the group's known extortion portal. Rauch confirmed that Next.js, Turbopack, and the rest of Vercel's open-source projects were audited and remain clean.

The pattern matters independently of whether the specific claims hold up. In contemporary incidents, the attacker's claims travel faster than the facts. Security teams must make containment decisions amid sustained ambiguity. Often, the internet is still negotiating how serious the breach actually is.

This was not a Vercel failure. It was a structural failure that lives inside almost every organization

Four reinforcing causes keep this attack pattern working.

- AI tools carry enterprise-grade access by design: Context.ai needed broad Google Workspace permissions to do useful work. That meant mail, calendar, drive, and directory scopes. The grant that makes the product valuable is the same one that makes a breach of it catastrophic for the customer. Most AI tools approved in recent years carry this property.

- OAuth grants do not expire by default: The approval is a one-time event. The access is permanent until someone revokes it. Vendors get acquired, change posture, swap dependencies, and ship new integrations. The grant remains unchanged throughout it all. Almost nobody reviews it until something goes wrong.

- Non-human identities are largely invisible to traditional security tooling: API keys, deploy tokens, environment variables, and CI/CD secrets are identities. They authenticate to critical systems. They outnumber human accounts by a wide margin in modern enterprises. Legacy IAM was built around joiners, movers, and leavers. These credentials accumulate unmonitored until an attacker enumerates them.

- Secrets are misclassified as routine matters: A developer creates an environment variable, leaves the sensitivity flag at the default, ships the feature, and moves on. That variable might hold a Stripe key or a production database URL. The attacker did not find a flaw in Vercel's security model. They relied on its predictable failure mode.
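The second cause in the list above, grants that never expire, lends itself to a simple periodic check. The sketch below assumes you keep an inventory of grants with an approval date and an optional last-review date. The `OAuthGrant` type, the scope markers, and the 90-day threshold are all illustrative assumptions, not anything Google Workspace enforces.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple

# Substrings of Google scopes treated as sensitive here. This list is an
# assumption for illustration; extend it to match your own policy.
SENSITIVE_SCOPE_MARKERS = ("mail.google.com", "gmail", "drive", "calendar", "admin.directory")


@dataclass(frozen=True)
class OAuthGrant:
    app_name: str
    scopes: Tuple[str, ...]
    approved_on: date
    last_reviewed_on: Optional[date] = None


def grants_due_for_review(grants, today, max_age_days=90):
    """Return app names whose sensitive grants have gone unreviewed too long."""
    due = []
    for grant in grants:
        # A grant that was never reviewed is aged from its approval date.
        reference = grant.last_reviewed_on or grant.approved_on
        age_days = (today - reference).days
        is_sensitive = any(
            marker in scope
            for scope in grant.scopes
            for marker in SENSITIVE_SCOPE_MARKERS
        )
        if is_sensitive and age_days > max_age_days:
            due.append(grant.app_name)
    return due


inventory = [
    OAuthGrant("assistant", ("https://www.googleapis.com/auth/drive",), date(2025, 11, 1)),
    OAuthGrant("fonts", ("openid",), date(2024, 1, 1)),
    OAuthGrant("notes", ("https://www.googleapis.com/auth/calendar",), date(2025, 1, 1), date(2026, 3, 15)),
]
print(grants_due_for_review(inventory, today=date(2026, 4, 20)))
```

The point of the design is that age is measured from the last human decision, not from last use. A grant can be used every day and still never have been looked at.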

The mature security stack had nothing to inspect, because every action was authenticated

If you are wondering why a mature security stack would not have caught this chain, the answer is structural rather than tactical. Perimeter security had nothing to inspect. Every request was authenticated. Every access flowed through a sanctioned channel. No network boundary was crossed in any way perimeter tooling could see. Endpoint detection was equally blind. The employee's laptop may well have been clean throughout. The compromise lived at the SaaS layer: within Context.ai, within Google Workspace, and within Vercel's dashboard. No endpoint agent has visibility into any of those control planes. Traditional IAM tracks human accounts, MFA enforcement, and offboarding hygiene. It does not track an OAuth grant that a developer authorized three months ago. It does not track the API keys generated by your CI/CD pipeline overnight. It does not know that a variable flagged non-sensitive actually holds a production database password.

Infostealer credential monitoring is a fourth blind spot most organizations do not have covered.

According to Hudson Rock, the infection was identifiable as early as February. The attack was operationalized in April. That is a two-month window during which the credentials were live and usable before Vercel knew anything had happened. Most organizations have no process for monitoring whether employee credentials have appeared in stealer logs, even though that data is queryable today through threat intelligence providers. By the time the breach notification arrives, the window has already closed. Identity has become the attack surface. Most security programs still treat it as an access-control checklist.
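A monitoring process for stealer logs can start small. The sketch below assumes a threat intelligence provider hands you records containing a login, the service it belongs to, and an infection date. The `StealerRecord` shape is hypothetical, real feeds differ, and the sample data uses placeholder domains.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class StealerRecord:
    login: str            # username or email captured by the infostealer
    service_url: str      # where the credential was used
    infection_date: date


def exposed_corporate_logins(records, corporate_domains):
    """Return records whose login is an email on a corporate domain, oldest first."""
    domains = {d.lower() for d in corporate_domains}
    hits = []
    for record in records:
        _local, separator, domain = record.login.lower().rpartition("@")
        # Skip bare usernames; only email-style logins can be attributed by domain.
        if separator and domain in domains:
            hits.append(record)
    return sorted(hits, key=lambda r: r.infection_date)


feed = [
    StealerRecord("player123", "https://games.example.net", date(2026, 2, 3)),
    StealerRecord("Support@Example.com", "https://accounts.google.com", date(2026, 2, 10)),
    StealerRecord("dev@example.com", "https://app.example.org", date(2026, 2, 4)),
    StealerRecord("someone@other.example", "https://mail.example.net", date(2026, 2, 5)),
]
for hit in exposed_corporate_logins(feed, ["example.com"]):
    print(hit.infection_date, hit.login, hit.service_url)
```

Sorting oldest first keeps the longest-exposed credentials at the top of the rotation queue, which is where the two-month gap in this incident would have surfaced.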

Three layers. Four hops. Unosecur places a checkpoint at each layer

The Vercel attack involved four identity transitions, and each one should have produced a usable signal:

Credential theft → OAuth grant → Google Workspace account → Vercel environments

At every hop, an under-monitored identity with excessive privilege was used to reach the next layer. Unosecur's Unified Identity Fabric places a checkpoint at each transition.

The AI agent layer. Context.ai was not just a third-party tool. It was an AI agent with privileged access to an employee's entire Workspace. Unosecur treats AI agents and their connected tools as first-class identity principals, governed by Just-in-Time access, Just-Enough Privilege, and session-level token tracking. The same controls that govern a human employee's access apply to every AI agent in the environment. An agent that has not used a permission gets flagged for review.

The NHI layer. OAuth grants, the tokens they produce, API keys, deploy credentials, and environment variables are all non-human identities. They are the same problem at different points in the same lifecycle. The grant is what gets approved. The token is what gets used. The environment variable is where it gets stored. Unosecur inventories all of them continuously across Google Workspace, cloud providers and SaaS platforms. Each is risk-scored on privilege level, rotation age, and access patterns. A variable labeled non-sensitive that holds a production database URL surfaces as a high-risk misconfiguration for prioritized remediation. The specific indicator from this incident is queryable in plain English inside the platform. No specialist syntax. No manual audit cycle.
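To make the idea of risk-scoring non-human identities concrete, here is a deliberately simple sketch. It is not Unosecur's scoring model, which is not described in this article. The weights, the `NonHumanIdentity` type, and the cap on rotation age are all assumptions chosen for illustration, and access patterns are left out entirely.

```python
from dataclasses import dataclass

# Illustrative weights only. A real model would be calibrated on your environment.
PRIVILEGE_WEIGHT = {"read": 1, "write": 2, "admin": 3}


@dataclass(frozen=True)
class NonHumanIdentity:
    name: str
    privilege: str            # one of the PRIVILEGE_WEIGHT keys
    days_since_rotation: int
    marked_sensitive: bool


def risk_score(nhi):
    """Combine privilege, rotation age, and classification into one number."""
    score = PRIVILEGE_WEIGHT[nhi.privilege] * 10
    # One point per 30 days without rotation, capped at 12 points.
    score += min(nhi.days_since_rotation // 30, 12)
    # A credential left at the non-sensitive default is penalized.
    if not nhi.marked_sensitive:
        score += 15
    return score


identities = [
    NonHumanIdentity("PROD_DB_URL", "admin", 365, marked_sensitive=False),
    NonHumanIdentity("ci-read-token", "read", 20, marked_sensitive=True),
]
for nhi in sorted(identities, key=risk_score, reverse=True):
    print(nhi.name, risk_score(nhi))
```

Even a crude score like this puts the misclassified production database URL at the top of the list, which is the behavior the paragraph above describes.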

The behavior layer. Even with the best access controls, a sophisticated attacker will sometimes get through. That is when behavioral detection matters. Unosecur monitors identity activity continuously against MITRE ATT&CK baselines. The pattern in the Vercel incident is exactly what the ITDR layer is built to catch: an identity reaching outside its normal scope at unusual velocity following an upstream vendor compromise. That detection triggers predefined automated workflows to contain the threat. An analyst can quarantine the identity rapidly rather than file a ticket and wait.

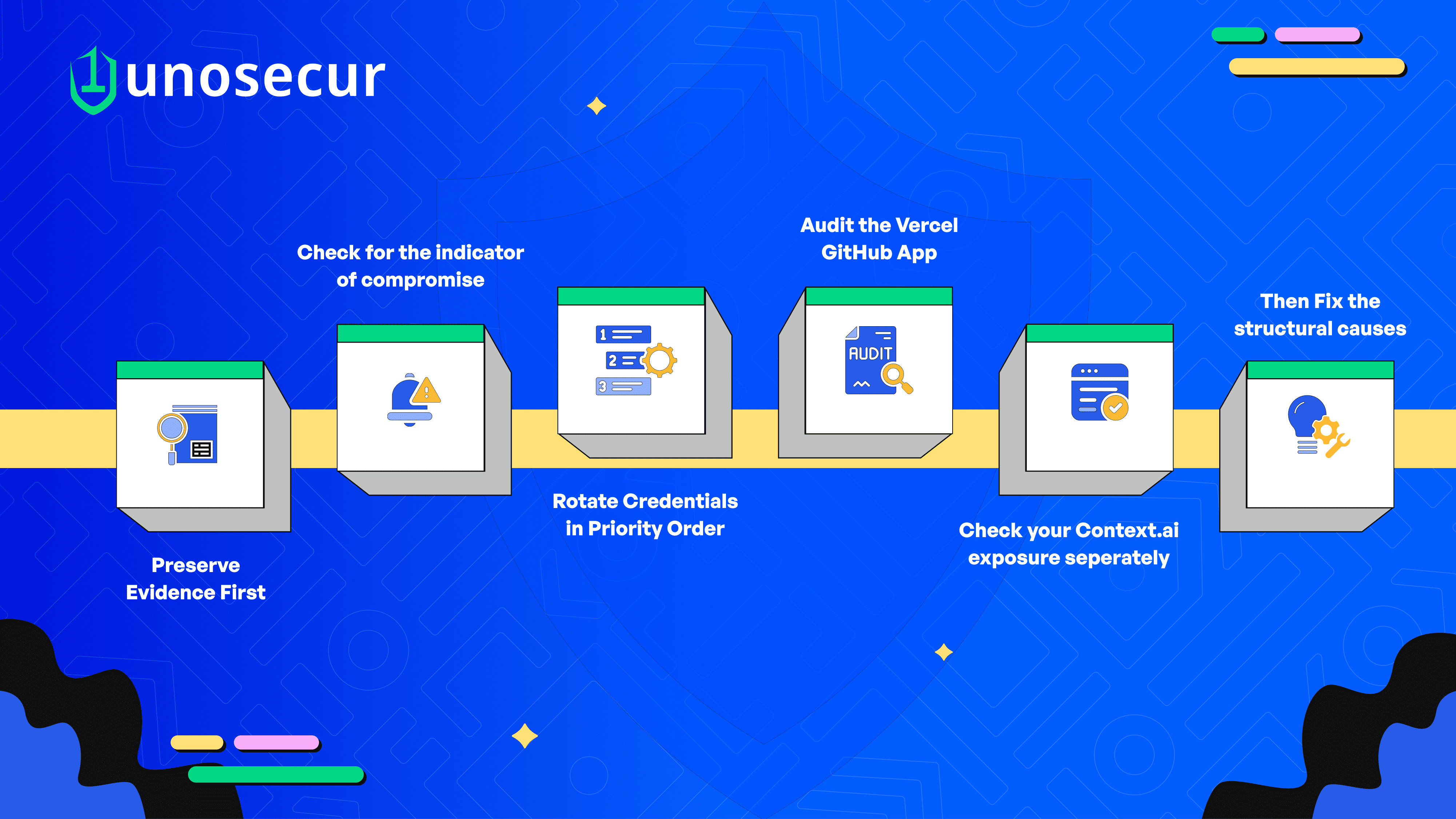

How to remediate your blast radius within 24 hours

If your organization runs workloads on Vercel or uses Context.ai independently of Vercel, work through the following in order.

Preserve evidence first. Default audit log retention is short. Any change you make pollutes the record. Export the Vercel audit log and your GitHub organization audit log before touching anything else.

Check for the indicator of compromise. In the Google Workspace Admin Console under Security, Access and data control, API controls, App access control, look for the OAuth client ID 11067145987130f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

If it appears, your organization was inside the exposure window. Revoke it and trigger incident response.
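If you prefer to check an export rather than click through the console, the lookup can be sketched as below. The client ID is copied from the step above. The record shape, with `clientId` and `userKey` fields, is an assumption modeled on token listings from the Admin SDK Directory API, so adjust the field names to whatever your export actually contains.

```python
import re

# Indicator copied from the text above.
IOC_CLIENT_ID = "11067145987130f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"


def _normalize(client_id):
    """Strip whitespace and hyphens and lowercase, so copy-paste artifacts
    in either the indicator or the export do not cause a miss."""
    return re.sub(r"[\s\-]", "", client_id).lower()


def users_with_ioc_grant(token_records):
    """Return the sorted set of users who hold a grant for the IOC client ID."""
    target = _normalize(IOC_CLIENT_ID)
    return sorted(
        {
            record["userKey"]
            for record in token_records
            if _normalize(record.get("clientId", "")) == target
        }
    )


# Placeholder records for illustration only.
export = [
    {"userKey": "alice@example.com", "clientId": IOC_CLIENT_ID},
    {"userKey": "bob@example.com", "clientId": "000000000000-abc.apps.googleusercontent.com"},
    {"userKey": "carol@example.com", "clientId": " " + IOC_CLIENT_ID.upper() + " "},
    {"userKey": "dave@example.com"},
]
print(users_with_ioc_grant(export))
```

Any non-empty result means the grant existed for that user and the revocation and incident response steps above apply.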

Rotate credentials in priority order: GitHub Personal Access Tokens come first. Vercel environment variables touching payments, databases, auth signing, and cloud provider access come next. Redeploy after rotation. Old secrets remain live inside existing deployments until you do. This step gets skipped routinely.

Audit the Vercel GitHub App: Check which repositories it can reach under your GitHub organization settings. If it has organization-wide access, scope it down to the repositories it actually deploys. Review the GitHub audit log from 1 April 2026 forward for new collaborators, OAuth grants, deploy keys, workflow changes, and branch protection modifications.

Check your Context.ai exposure separately: If anyone in your organization authenticated to Context.ai outside the Vercel context, you may have a direct exposure window of your own. Query your identity provider for those authentications. Review Google Workspace OAuth logs for the associated app IDs.

Then fix the structural causes: Mark all secrets sensitive by default. Migrate toward short-lived credentials through GitHub OIDC federation rather than long-lived environment variable keys. Pull your full Google Workspace OAuth application report. Institute a quarterly review with security sign-off required for any grant carrying sensitive scopes.

Vercel will recover. The path the attacker used will not close

Confirmed impact appears bounded, Mandiant is engaged, and the engineering team is competent. The problem is that Vercel is not the last company this will happen to. AI tool adoption is moving faster than any security program can keep up with. OAuth grants accumulate silently inside Google Workspace and other providers. Non-human identities outnumber human identities by orders of magnitude and receive a fraction of the attention. Attackers are becoming more precise at identifying the trust paths that already exist between all of these systems. Many now operate with their own AI-accelerated tooling, as Rauch himself noted.

Security teams will frame this as a supply chain problem. It is also an insider threat problem: not a malicious one, but a behavioral one. A developer downloaded software from an untrusted source on a work machine. An employee approved an OAuth app without a security review. An administrator granted broad Workspace scopes to a tool that did not need them. None of it was malicious. All of it created the path. Insider threat programs that focus only on bad intent miss the broader category of risk that enabled this breach. The path into Vercel was already there. Nobody built it maliciously. It was built by a developer approving a useful AI tool, by a teammate creating an environment variable under deadline, and by an administrator approving an OAuth scope without dwelling on what it was connected to. That same path is being constructed inside your environment right now. The question is whether you can see it before someone else does.
